Develop a Volatility Plugin to Recover ML Models

The goal is to create a Volatility3 plugin that traverses a memory image to find PyTorch models and extract their attributes and data. The runtime state of a Python process is managed by a structure called PyRuntimeState. How can you locate this object in your memory image?

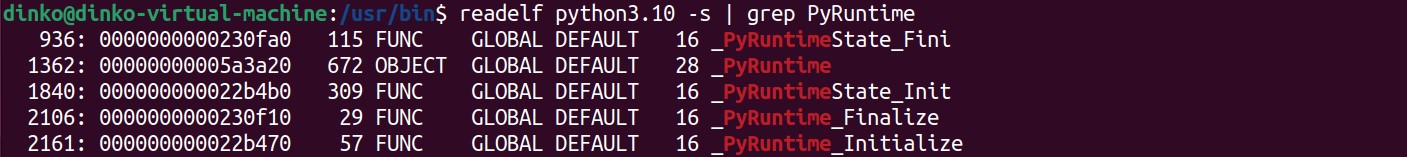

- Find the python3 executable on your file system. Then run readelf with the -s flag to print the file's symbol table, and pipe the output through grep to search for ‘PyRuntime’.

Note: The symbol table gives you the offsets of global structures relative to the executable's base address in the process.

```
/usr/bin$ readelf python3.10 -s | grep PyRuntime
```

The PyRuntimeState structure can be found by adding the offset (0x05a3a20) to the base address of the executable’s VMA (0x555555554000). The result is 0x555555AF7A20.
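The lookup and the addition can be scripted. The sketch below assumes the symbol is named _PyRuntime (its name in CPython 3.10) and that readelf -s prints rows in its usual "Num: Value Size Type Bind Vis Ndx Name" column order; the function names are illustrative and not part of the original scripts. Matching the name exactly avoids picking up functions such as _PyRuntimeState_Init that the grep also returns.

```python
def pyruntime_offset(readelf_output: str, symbol: str = "_PyRuntime") -> int:
    """Return the Value column of `symbol` from `readelf -s` output."""
    for line in readelf_output.splitlines():
        fields = line.split()
        # Symbol rows: Num: Value Size Type Bind Vis Ndx Name
        if len(fields) >= 8 and fields[7].split("@")[0] == symbol:
            return int(fields[1], 16)
    raise LookupError(f"{symbol} not found in readelf output")


def pyruntime_address(vma_start: int, offset: int) -> int:
    """PyRuntimeState address = base of the executable's first VMA + symbol offset."""
    return vma_start + offset


if __name__ == "__main__":
    print(hex(pyruntime_address(0x555555554000, 0x05A3A20)))  # 0x555555af7a20
```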

- To find objects, use the fact that Python has built-in garbage collection (GC). The GC keeps its state in a _gc_runtime_state structure. In Python 3.8 and earlier, this structure was stored directly in PyRuntimeState; in later versions it is stored in PyInterpreterState. By reading through the CPython core source files, you can establish the following pointer chain:

  - A pointer to PyInterpreterState at offset 40 (bytes) of PyRuntimeState.
  - _gc_runtime_state at offset 616 of PyInterpreterState.
  - The head of the GC object list, at offset 24 of _gc_runtime_state. This is the first generation's PyGC_Head list head, whose _gc_next points to the first tracked object.

  Note: In this example, I am using Python v3.10.6. Your offsets might vary depending on your version of Python, so select the matching branch or tag of the CPython repository on GitHub.
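Only the first hop dereferences a pointer; the other two are embedded structures, so their offsets are simply added. A minimal sketch, assuming the 3.10.6 x64 offsets above and a 24-byte struct gc_generation (a 16-byte PyGC_Head followed by two ints); read_u64 is a hypothetical callback returning the little-endian 8-byte value at a virtual address (in a plugin it would wrap the process layer's read()).

```python
from typing import Callable, List

# Python 3.10.6, x86-64 offsets from the chain above
PYRUNTIME_TO_INTERP = 40  # PyRuntimeState -> PyInterpreterState pointer
INTERP_TO_GC = 616        # PyInterpreterState -> embedded _gc_runtime_state
GC_TO_GENERATIONS = 24    # _gc_runtime_state -> generations[0] (embedded)
GENERATION_SIZE = 24      # PyGC_Head (16 bytes) + threshold + count (2 x int)
NUM_GENERATIONS = 3


def generation_heads(read_u64: Callable[[int], int], pyruntime_addr: int) -> List[int]:
    """Return the addresses of the per-generation PyGC_Head list heads."""
    interp = read_u64(pyruntime_addr + PYRUNTIME_TO_INTERP)  # follow the pointer
    first = interp + INTERP_TO_GC + GC_TO_GENERATIONS  # embedded structs: plain addition
    return [first + i * GENERATION_SIZE for i in range(NUM_GENERATIONS)]
```

With the interpreter pointer found in this memory image (0x555555b3fa70), the first list head lands at 0x555555b3fa70 + 640 = 0x555555b3fcf0.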

At this point, it is a matter of traversing the doubly linked list (DLL) of PyGC_Head objects. For reference, here is the memory layout of a GC-tracked Python object (GC design):

Python Object Structure

```
              +--+--+--+--+--+--+--+--+--+--+--+--+--+--+--+--+ \
              |                   *_gc_next                   | |
              +--+--+--+--+--+--+--+--+--+--+--+--+--+--+--+--+ | PyGC_Head
              |                   *_gc_prev                   | |
object -----> +--+--+--+--+--+--+--+--+--+--+--+--+--+--+--+--+ /
              |                   ob_refcnt                   | \
              +--+--+--+--+--+--+--+--+--+--+--+--+--+--+--+--+ | PyObject_HEAD
              |                   *ob_type                    | |
              +--+--+--+--+--+--+--+--+--+--+--+--+--+--+--+--+ /
              |                      ...                      |
```
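Because the layout is fixed, one 32-byte read covers both headers. The sketch below decodes them with the struct module, assuming x86-64 (little-endian, 8-byte pointers). It also assumes, as in CPython's GC since 3.8, that the two low bits of _gc_prev hold flags and must be masked off before the value is used as an address; the names are illustrative.

```python
import struct
from typing import NamedTuple

PREV_FLAG_BITS = 0b11  # assumption: low 2 bits of _gc_prev are GC flags (CPython 3.8+)


class GCObject(NamedTuple):
    gc_next: int      # address of the next PyGC_Head
    gc_prev: int      # address of the previous PyGC_Head (flags masked off)
    ob_refcnt: int
    ob_type: int      # address of the PyTypeObject
    object_addr: int  # address of the PyObject itself (PyGC_Head + 16)


def parse_gc_object(head_addr: int, raw: bytes) -> GCObject:
    """Decode PyGC_Head + PyObject_HEAD from 32 raw bytes read at head_addr."""
    gc_next, gc_prev, refcnt, ob_type = struct.unpack_from("<QQqQ", raw)
    return GCObject(gc_next, gc_prev & ~PREV_FLAG_BITS, refcnt, ob_type, head_addr + 16)
```

Feeding it the 32 bytes at 0x7fff516fe6f0 from the volshell session below yields _gc_next = 0x7fff516fe150, _gc_prev = 0x555555b3fcf0 (the generation's list head), ob_refcnt = 1, and a PyObject at 0x7fff516fe700.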

- Start volshell, Volatility's interactive shell. It lets you traverse memory manually and confirm the offsets you calculated.

```
/volatility3$ python3 volshell.py -f ../LiME/images/memdump.lime -l
Volshell (Volatility 3 Framework) 2.4.2
Readline imported successfully Stacking attempts finished

Call help() to see available functions

Volshell mode : Linux
Current Layer : layer_name
Current Symbol Table : symbol_table_name1
Current Kernel Name : kernel

(layer_name) >>>
```

- Change the context to the correct process. From now on, the addresses you pass to volshell are interpreted as virtual addresses in the address space of process 3391.

```
(layer_name) >>> ct(pid=3391)
(layer_name_Process3391) >>>
```

- Use the db function to display bytes. Walk down the pointer chain established above, calculating addresses along the way, and visually check that pointers and other fields sit where you expect them to be in the memory image.

Notes:

- Be aware of the architecture's endianness (x86-64 is little-endian, so the bytes of each pointer appear in reverse order)

- I have marked the correct pointers with ‘^’ characters

- I have shortened some of the outputs with “…”

```
(layer_name_Process3391) >>> db(0x555555AF7A20, 48)
0x555555af7a20 00 00 00 00 01 00 00 00 01 00 00 00 01 00 00 00 ................
0x555555af7a30 00 00 00 00 00 00 00 00 a0 e2 b3 55 55 55 00 00 ...........UUU..
0x555555af7a40 70 fa b3 55 55 55 00 00 70 fa b3 55 55 55 00 00 p..UUU..p..UUU..
                                       ^^^^^^^^^^^^^^^^^^^^^^^

(layer_name_Process3391) >>> db(0x555555b3fa70, 720)
0x555555b3fa70 00 00 00 00 00 00 00 00 20 70 d6 5a 55 55 00 00 .........p.ZUU..
0x555555b3fa80 20 7a af 55 55 55 00 00 00 00 00 00 00 00 00 00 .z.UUU..........
0x555555b3fa90 ff ff ff ff ff ff ff ff 00 00 00 00 00 00 00 00 ................
0x555555b3faa0 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 ................
0x555555b3fab0 e8 03 00 00 00 00 00 00 00 00 00 00 00 00 00 00 ................
0x555555b3fac0 f0 b5 b5 55 55 55 00 00 00 00 00 00 00 00 00 00 ...UUU..........
0x555555b3fad0 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 ................
...
0x555555b3fce0 00 00 00 00 01 00 00 00 00 00 00 00 00 00 00 00 ................
0x555555b3fcf0 f0 e6 6f 51 ff 7f 00 00 b0 49 29 54 ff 7f 00 00 ..oQ.....I)T....
0x555555b3fd00 bc 02 00 00 79 01 00 00 50 89 fa 5a 55 55 00 00 ....y...P..ZUU..
0x555555b3fd10 90 d3 6f 51 ff 7f 00 00 0a 00 00 00 06 00 00 00 ..oQ............
0x555555b3fd20 70 80 d0 f6 ff 7f 00 00 f0 bd 7b 51 ff 7f 00 00 p.........{Q....
0x555555b3fd30 0a 00 00 00 02 00 00 00 f0 fc b3 55 55 55 00 00 ...........UUU..
                                       ^^^^^^^^^^^^^^^^^^^^^^^

(layer_name_Process3391) >>> db(0x555555b3fcf0)
0x555555b3fcf0 f0 e6 6f 51 ff 7f 00 00 b0 49 29 54 ff 7f 00 00 ..oQ.....I)T....
               ^^^^^^^^^^^^^^^^^^^^^^^
0x555555b3fd00 bc 02 00 00 79 01 00 00 50 89 fa 5a 55 55 00 00 ....y...P..ZUU..
0x555555b3fd10 90 d3 6f 51 ff 7f 00 00 0a 00 00 00 06 00 00 00 ..oQ............
0x555555b3fd20 70 80 d0 f6 ff 7f 00 00 f0 bd 7b 51 ff 7f 00 00 p.........{Q....
0x555555b3fd30 0a 00 00 00 02 00 00 00 f0 fc b3 55 55 55 00 00 ...........UUU..
0x555555b3fd40 40 fd b3 55 55 55 00 00 40 fd b3 55 55 55 00 00 @..UUU..@..UUU..
0x555555b3fd50 00 00 00 00 00 00 00 00 87 01 00 00 00 00 00 00 ................
0x555555b3fd60 cd 02 00 00 00 00 00 00 00 00 00 00 00 00 00 00 ................

(layer_name_Process3391) >>> db(0x7fff516fe6f0)
0x7fff516fe6f0 50 e1 6f 51 ff 7f 00 00 f0 fc b3 55 55 55 00 00 P.oQ.......UUU..
               ^^^^^^^^^^^^^^^^^^^^^^^
0x7fff516fe700 01 00 00 00 00 00 00 00 a0 66 3c 59 55 55 00 00 .........f<YUU..
0x7fff516fe710 00 00 00 00 00 00 00 00 20 2f 13 5b 55 55 00 00 ........./.[UU..
...

# At this point, you are at the 2nd object and can continue traversing via _gc_next pointers
```
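Reading pointers off a hex dump by eye is error-prone because of byte order. The helper below is a sketch that assumes db() rows shaped like the ones above (an address, 16 hex bytes, then the ASCII column); it turns a row into integers so the offsets can be checked programmatically. The function names are illustrative.

```python
import struct
from typing import List, Tuple


def parse_db_row(row: str) -> Tuple[int, bytes]:
    """Split a db() output row into (address, 16 raw bytes)."""
    tokens = row.split()
    address = int(tokens[0], 16)
    data = bytes(int(tok, 16) for tok in tokens[1:17])  # ignore the ASCII column
    return address, data


def u64_le(data: bytes) -> List[int]:
    """Interpret the bytes as consecutive little-endian 8-byte values (pointers)."""
    count = len(data) // 8
    return list(struct.unpack(f"<{count}Q", data[: count * 8]))
```

For example, the row at 0x555555af7a40 decodes to two copies of 0x555555b3fa70 (the PyInterpreterState pointer), and the first 8 bytes of the row at 0x555555b3fd00 decode as the ints 700 and 377, the threshold and count fields of generation 0's struct gc_generation.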

I wrote a plugin - PyListObjects.py - to automate the traversal of GC objects and output the object types and virtual addresses. Here is how you can do it:

- Create a new .py file in volatility3/framework/plugins/. Plugin classes must inherit from PluginInterface. Specify the plugin and framework versions, and implement the get_requirements() method (a classmethod, so Volatility can query it before the class is instantiated). The module requirement specifies the required symbol table (kernel symbols) and translation layer architecture (Intel64). The plugin requirement declares the use of another plugin (recall pslist). Finally, the list requirements specify arguments that must be provided at the start of execution.

```python
import json  # used by _generator() to write PyListObjects.txt

from volatility3.framework import interfaces
from volatility3.framework import renderers
from volatility3.framework.configuration import requirements
from volatility3.framework.objects import utility
from volatility3.plugins.linux import pslist


class PyListObjects(interfaces.plugins.PluginInterface):
    _version = (1, 0, 0)  # Plugin version
    _required_framework_version = (2, 0, 0)  # Volatility version

    @classmethod
    def get_requirements(cls):
        return [
            # Kernel symbol table and an Intel64 translation layer
            requirements.ModuleRequirement(
                name="kernel",
                description="Linux kernel",
                architectures=["Intel64"],
            ),
            # Reuse the pslist plugin to enumerate tasks
            requirements.PluginRequirement(
                name="pslist", plugin=pslist.PsList, version=(2, 0, 0)
            ),
            requirements.ListRequirement(
                name="pid",
                description="PID of the Python process in question",
                element_type=int,
                optional=False,
            ),
            requirements.ListRequirement(
                name="PyRuntime",
                description="Offset of the PyRuntime symbol from your system's Python3 executable",
                element_type=str,
                optional=False,
            ),
        ]
```

- The run() method is the entry point of a Volatility plugin (it is called after the requirements are satisfied). Here, you use two pslist classmethods to get a list of the tasks that match the PID(s) provided. That list is passed into the plugin's workhorse, the _generator() method. run() returns a TreeGrid object that specifies the columns and data types Volatility should output; the rows are the values that _generator() yields.

```python
    def run(self):
        # Keep only the task(s) whose PID was passed with --pid
        filter_func = pslist.PsList.create_pid_filter(self.config.get("pid", None))

        return renderers.TreeGrid(
            [
                ("PID", int),
                ("Process", str),
                ("ObjectPtr", str),
                ("Type", str),
            ],
            self._generator(
                pslist.PsList.list_tasks(
                    self.context,
                    self.config["kernel"],
                    filter_func=filter_func,
                )
            ),
        )
```

Notes:

- Refer to the Volatility docs for available functions, attributes, etc.

- Read the documentation/comments in my repo for more details

- Take the first task in the list, because you expect to be given only one PID, corresponding to the ML process. Return gracefully if there is no matching task or it has no memory mapping. Next, recall the output of linux.proc: the mappings are listed in ascending order of start address, and the first one is the Python ELF. Create a process layer (a set of translation layers between the virtual and physical address spaces) to use throughout the plugin. Finally, calculate the garbage collector address and call a get_objects() function to encapsulate that functionality.

```python
    def _generator(self, tasks):
        tasks = list(tasks)
        if not tasks:  # no task matched the PID filter
            return
        task = tasks[0]  # only one PID (the ML process) is expected
        if not task.mm:  # kernel threads have no user-space memory map
            return

        task_name = utility.array_to_string(task.comm)
        vma = list(task.mm.get_mmap_iter())[0]  # first memory mapping is the Python executable/interpreter
        vma_start = vma.vm_start  # base virtual address
        path = vma.get_name(self.context, task)

        task_layer = task.add_process_layer()
        curr_layer = self.context.layers[task_layer]

        pyrunstr = self.config.get("PyRuntime", None)[0]  # 'PyRuntime' arg from command line
        try:
            PyRuntimeOffset = int(pyrunstr, 16)  # offset of PyRuntimeState in the executable's VMA
        except ValueError:
            print("Invalid PyRuntime hexadecimal string: ", pyrunstr)
            return

        PyRuntimeState = vma_start + PyRuntimeOffset

        PyIntrpState = int.from_bytes(  # PyIntrpState ptr at offset 40 bytes
            curr_layer.read(PyRuntimeState + 40, 8),
            byteorder="little",
        )

        # First gc_generation at offset 640 bytes (616 to _gc_runtime_state + 24)
        # https://github.com/python/cpython/blob/v3.10.6/Include/internal/pycore_gc.h#L140
        PYGC_HEAD_OFFSET = 640

        object_map = get_objects(curr_layer, PyIntrpState, PYGC_HEAD_OFFSET)
```
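The plugin relies on the first mapping being the interpreter. A slightly more defensive variant, sketched here as an alternative rather than the plugin's actual logic, picks the lowest-addressed mapping whose file name starts with "python". Mappings are modeled as hypothetical (start, end, path) tuples instead of Volatility vm_area_struct objects, so the selection rule can be tested on its own.

```python
import os
from typing import Iterable, Optional, Tuple

Mapping = Tuple[int, int, Optional[str]]  # (vm_start, vm_end, backing file path or None)


def find_interpreter_base(mappings: Iterable[Mapping], prefix: str = "python") -> Optional[int]:
    """Return the lowest start address of a mapping backed by the Python binary."""
    starts = [
        start
        for start, _end, path in mappings
        if path and os.path.basename(path).startswith(prefix)
    ]
    return min(starts) if starts else None
```

The basename check skips shared objects such as libc or extension modules, which would otherwise be chosen if the mapping order ever differed from what linux.proc shows.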

- Create a dict that will map from address (unique) to type name. For each of the three GC generations, traverse through the doubly linked list and acquire the type name by accessing the PyTypeObject of each GC object. Add each entry into the dict/map with the address of PyGC_Head + 16 (bytes) because you want the base address of the PyObject itself.

Notes:

- Every object in Python has a type specified by a PyTypeObject

- The type name is a C string pointed to by the tp_name field of PyTypeObject (offset 24 on x64)

- Although you could create your own objects to represent structures in memory (as you will see in the next plugin), here it is smart to optimize for runtime performance by reading and interpreting the bytes manually. Otherwise, you would be instantiating tens of thousands of Volatility objects (one per GC object) for a marginal benefit in abstraction.

```python
def get_objects(curr_layer, PyIntrpState, PyGC_Head_Offset):
    """Map the address of every GC-tracked object to its type name."""
    object_map = {}

    for _ in range(3):  # 3 GC generations (separated by 24 bytes)
        # Sentinel list head of this generation. The list is circular, so
        # the walk is complete when it arrives back at this address.
        gc_list_head = PyIntrpState + PyGC_Head_Offset

        PyGC_Head = int.from_bytes(  # sentinel's _gc_next = first object
            curr_layer.read(gc_list_head, 8),
            byteorder="little",
        )

        while PyGC_Head != gc_list_head:
            ptr_next = int.from_bytes(  # _gc_next: next GC object
                curr_layer.read(PyGC_Head, 8),
                byteorder="little",
            )
            ptr_type = int.from_bytes(  # ob_type: ptr to PyTypeObject
                curr_layer.read(PyGC_Head + 24, 8),
                byteorder="little",
            )
            ptr_tp_name = int.from_bytes(  # tp_name: ptr to type name
                curr_layer.read(ptr_type + 24, 8),
                byteorder="little",
            )
            # tp_name is NUL-terminated: keep the bytes before the first NUL
            raw_name = curr_layer.read(ptr_tp_name, 64, pad=True)
            tp_name = raw_name.split(b"\x00", 1)[0].decode("ascii", errors="replace")

            object_map[hex(PyGC_Head + 16)] = tp_name  # base address of the PyObject
            PyGC_Head = ptr_next

        PyGC_Head_Offset += 24

    return object_map
```
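The traversal logic can be tested without a memory image. The sketch below uses a hypothetical read_u64 callback in place of curr_layer.read(); it stops when the walk returns to the generation's sentinel head and adds a step limit, because a smeared or inconsistent image can contain links that never lead back. The limit and the names are assumptions, not part of the plugin.

```python
from typing import Callable, Iterator

PYGC_HEAD_SIZE = 16  # _gc_next + _gc_prev


def walk_gc_list(
    read_u64: Callable[[int], int], list_head: int, max_objects: int = 1_000_000
) -> Iterator[int]:
    """Yield the PyObject address of every object in one generation's circular list."""
    node = read_u64(list_head)  # sentinel's _gc_next: first tracked object
    steps = 0
    while node != list_head:  # back at the sentinel: every object has been visited
        if steps >= max_objects:
            raise RuntimeError("GC list did not return to its head; check offsets or image")
        yield node + PYGC_HEAD_SIZE
        node = read_u64(node)  # follow _gc_next
        steps += 1
```

An empty generation is handled naturally: its sentinel's _gc_next points to itself, so nothing is yielded.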

- Back in _generator(), yield each object map entry to Volatility and also write it to a text file for ease of searching.

```python
        with open("./PyListObjects.txt", "w") as f:
            for k, v in object_map.items():
                f.write(json.dumps((k, v)))  # one JSON array per line
                f.write("\n")
                yield (
                    0,
                    (
                        task.pid,
                        task_name,
                        k,
                        v,
                    ),
                )
```

You can see the example output of my memory image here. If you search for the addresses you obtained through the debugger, you will find them:

```
["0x7fff5160e890", "DataLoader"]
["0x7fff5160eb90", "SGD"]
["0x7fff5160ea70", "Net"]
```

Note: Take a second to see what other objects were found, such as Tensor and Parameter.
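Because each line of PyListObjects.txt is a JSON array, the file is easy to query. A small sketch, assuming the file format written by the _generator() code; the helper names are illustrative.

```python
import json
from collections import Counter
from typing import Iterable, List, Tuple


def load_entries(lines: Iterable[str]) -> List[Tuple[str, str]]:
    """Parse PyListObjects.txt: one JSON [address, type name] array per line."""
    return [tuple(json.loads(line)) for line in lines if line.strip()]


def addresses_of(entries: List[Tuple[str, str]], type_name: str) -> List[str]:
    """Addresses of all objects whose type name matches exactly."""
    return [addr for addr, tp_name in entries if tp_name == type_name]


def type_histogram(entries: List[Tuple[str, str]]) -> Counter:
    """How many objects of each type were found."""
    return Counter(tp_name for _addr, tp_name in entries)
```

For example, opening PyListObjects.txt and passing the file object to load_entries() gives a list on which addresses_of(entries, "Net") returns every Net instance, while type_histogram(entries).most_common(10) shows which types dominate the heap.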

Now that you have found all the objects, move on to investigating the convolutional neural network. The plugin I created for this is PyTorchFind.py.

- Follow the same workflow. The get_requirements() and run() methods are generally the same. The first notable difference is the use of symbols.

```python
python_table_name = PythonIntermedSymbols.create(
    self.context, self.config_path, sub_path="generic", filename="python-3.10-x64"
)

pytorch_table_name = PyTorchIntermedSymbols.create(
    self.context, self.config_path, sub_path="generic", filename="pytorch-2.0-x64"
)
```

This code shows the instantiation of symbol tables for Python and PyTorch types. These tables are called upon to provide symbol information (class, attributes, and functions).

- Create a file for each symbol table. Inherit from one of Volatility’s symbol table base classes (this one reads JSON for type definitions). Bind each of your types to a class.

```python
from volatility3.framework.symbols import intermed
from volatility3.framework import objects, constants


class PythonIntermedSymbols(intermed.IntermediateSymbolTable):
    """
    Symbol table for Python types.
    - Developed for Python 3.10.6 on x64 architectures
    - Operability with other versions is not guaranteed
    """

    def __init__(self, *args, **kwargs):
        super().__init__(*args, **kwargs)
        # Bind each JSON type to its implementing class (classes defined in this module)
        self.set_type_class("PyGC_Head", PyGC_Head)  # https://github.com/python/cpython/blob/v3.10.6/Include/internal/pycore_gc.h#L20
        self.set_type_class("PyObject", PyObject)  # https://github.com/python/cpython/blob/v3.10.6/Include/object.h#L109
        self.set_type_class("PyTypeObject", PyTypeObject)  # https://github.com/python/cpython/blob/v3.10.6/Include/cpython/object.h#L191
        self.set_type_class("PyDictObject", PyDictObject)  # https://github.com/python/cpython/blob/v3.10.6/Include/cpython/dictobject.h#L28
        self.set_type_class("PyDictKeysObject", PyDictKeysObject)  # https://github.com/python/cpython/blob/main/Include/internal/pycore_dict.h#L72
        self.set_type_class("PyDictKeyEntry", PyDictKeyEntry)  # https://github.com/python/cpython/blob/main/Include/internal/pycore_dict.h#L28
        self.set_type_class("PyASCIIObject", PyASCIIObject)  # https://github.com/python/cpython/blob/3.10/Include/cpython/unicodeobject.h#L219
        self.set_type_class("PyBoolObject", PyBoolObject)  # https://github.com/python/cpython/blob/v3.10.6/Include/boolobject.h#L22
        self.set_type_class("PyLongObject", PyLongObject)  # https://github.com/python/cpython/blob/v3.10.6/Include/longintrepr.h#L85
        self.set_type_class("PyTupleObject", PyTupleObject)  # https://github.com/python/cpython/blob/v3.10.6/Include/cpython/tupleobject.h#L11
```

For example, here is the JSON structure for a PyDictKeyEntry object. It specifies structure fields, field types, and relative offsets.

```json
"PyDictKeyEntry": {
  "fields": {
    "me_hash": {
      "offset": 0,
      "type": {
        "kind": "base",
        "name": "unsigned long long"
      }
    },
    "me_key": {
      "offset": 8,
      "type": {
        "kind": "pointer",
        "subtype": {
          "kind": "struct",
          "name": "PyASCIIObject"
        }
      }
    },
    "me_value": {
      "offset": 16,
      "type": {
        "kind": "pointer",
        "subtype": {
          "kind": "struct",
          "name": "PyObject"
        }
      }
    }
  },
  "kind": "struct",
  "size": 24
}
```
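The JSON is a machine-readable struct layout. To cross-check it against raw bytes outside Volatility, the same 24-byte layout can be expressed with Python's struct module. This sketch follows the field types in the JSON (little-endian x64, unsigned 8-byte hash) and is illustrative only; the names are not part of the plugin.

```python
import struct
from typing import List, NamedTuple

# me_hash (unsigned long long) @0, me_key (pointer) @8, me_value (pointer) @16
DICT_KEY_ENTRY = struct.Struct("<QQQ")


class DictKeyEntry(NamedTuple):
    me_hash: int
    me_key: int    # address of the key object (PyASCIIObject)
    me_value: int  # address of the value object (PyObject)


def parse_entries(raw: bytes, count: int) -> List[DictKeyEntry]:
    """Decode `count` consecutive PyDictKeyEntry records from raw bytes."""
    return [
        DictKeyEntry(*DICT_KEY_ENTRY.unpack_from(raw, i * DICT_KEY_ENTRY.size))
        for i in range(count)
    ]
```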

This is the corresponding class implementation. Add any functionality you need the object to perform. The get_key() method dereferences the key pointer, which the JSON specifies as a PyASCIIObject pointer, and then calls get_value() of the PyASCIIObject class.

```python
class PyDictKeyEntry(objects.StructType):
    def get_key(self):
        """
        Retrieves the value of the dict key (ASCII str).
        """
        return self.me_key.dereference().get_value()
```
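The get_value() implementation is not shown here. To illustrate what it has to interpret, the sketch below decodes a compact ASCII string from raw bytes. It assumes CPython 3.10 on x86-64 with GCC bit-field ordering, where PyASCIIObject is 48 bytes (PyObject_HEAD, length, hash, a 4-byte state bit field padded to 8, and the wstr pointer) and the characters follow immediately after it. Strings that are not compact ASCII use other layouts and are rejected rather than guessed; the names are illustrative.

```python
import struct

ASCII_LENGTH_OFFSET = 16  # Py_ssize_t length, right after PyObject_HEAD
ASCII_STATE_OFFSET = 32   # bit field: interned:2, kind:3, compact:1, ascii:1, ready:1
ASCII_DATA_OFFSET = 48    # sizeof(PyASCIIObject) on 3.10 x64 (assumption)


def decode_compact_ascii(raw: bytes) -> str:
    """Decode a compact ASCII str object from bytes read at its PyObject address."""
    (length,) = struct.unpack_from("<q", raw, ASCII_LENGTH_OFFSET)
    (state,) = struct.unpack_from("<I", raw, ASCII_STATE_OFFSET)
    compact = (state >> 5) & 1
    is_ascii = (state >> 6) & 1
    if not (compact and is_ascii):
        raise ValueError("not a compact ASCII string; other layouts need different handling")
    data = raw[ASCII_DATA_OFFSET:ASCII_DATA_OFFSET + length]
    return data.decode("ascii")
```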

As you develop the plugin, incrementally add types to your JSON specifications and symbol tables. Familiarize yourself with my implementation of these files to understand what follows.

- Extend the get_objects() method to catch the neural network objects. Instantiate them using the classes you defined for the symbol table. Here, you can use the PyInstanceObject class, since PyTorch model instances are guaranteed to have a __dict__. In Python, this dictionary holds all the fields of the object.

```python
# Inside get_objects()'s traversal loop, once tp_name is known.
# context, python_table_name and models must be available here
# (e.g. passed in as extra arguments).
if tp_name == "Net":
    model = context.object(
        object_type=python_table_name + constants.BANG + "PyInstanceObject",
        layer_name=curr_layer.name,
        offset=PyGC_Head + 16,  # PyObject starts after the 16-byte PyGC_Head
    )
    models.append((tp_name, model))
```

- For each model, call a function to extract the layers. Begin by dereferencing the dict pointer to get the PyDictObject. The get_dict() function recreates the Python dictionary. A model is made up of layers, represented by modules in PyTorch. From PyTorch source, you know that the dict should contain a _modules field, which is another dict. Obtain the modules dict and see that each entry corresponds to a layer of the model.

```python
def get_modules(model):
    """Acquires the modules (i.e. layers) of the ML model.

    Args:
        model: (model name, PyInstanceObject) tuple for the ML model of interest

    Returns:
        A list of tuples: (module name, module object)
    """
    modules = []
    model_name, model_object = model[0], model[1]
    model_dict = model_object.dict.dereference().get_dict()  # the instance's __dict__
    modules_dict = model_dict["_modules"]  # nn.Module keeps its sub-modules here

    for module in modules_dict:
        modules.append((model_name + "." + module, modules_dict[module]))

    return modules
```

Example Printout:

```
('Net.conv1', <StructType python-3.10-x641!PyInstanceObject: layer_name_Process3391 @ 0x7fff5160eaa0 #24>)
('Net.pool', <StructType python-3.10-x641!PyInstanceObject: layer_name_Process3391 @ 0x7fff5160ead0 #24>)
('Net.conv2', <StructType python-3.10-x641!PyInstanceObject: layer_name_Process3391 @ 0x7fff5160eb00 #24>)
('Net.lin1', <StructType python-3.10-x641!PyInstanceObject: layer_name_Process3391 @ 0x7fff5160eb30 #24>)
```

- For all modules, begin once more by acquiring the object's dictionary. Through experimentation, you would find that dict fields beginning with a ‘_’ correspond to methods and dicts, while the others are raw attributes. Extract the values of the raw attributes by implementing new symbols for each type (e.g. tuple, long, str). Next, PyTorch source indicates there should be a _parameters dictionary. This is where tensors such as weights and biases are stored. For each parameter, instantiate your objects, dereference the tensor pointer, and get the tensor's data/values.

Note: See my PyTorch symbols for the memory representation of these objects.

```python
def get_tensors(context, curr_layer, pytorch_table_name, modules):
    """Acquires the tensors and attributes of each module.

    https://github.com/pytorch/pytorch/blob/v2.0.0/torch/csrc/api/include/torch/nn/module.h#L43

    Args:
        modules: list of (module name, PyInstanceObject) tuples for the model's layers

    Returns:
        A tuple (params, info): params is a list of (parameter name, parameter data)
        tuples; info is a printable summary of each module's attributes and parameters
    """
    params = []
    info = ""

    for module_name, module_object in modules:
        module_dict = module_object.dict.dereference().get_dict()
        info += "\n-----------------------------------------------------------------------------------------------------\n\n"
        info += module_name + " Attributes: \n\n"

        # Keys without a leading '_' are raw attributes (in_channels, stride, ...)
        for key in module_dict:
            if not key.startswith("_"):
                info += key + ": " + str(module_dict[key]) + "\n"

        param_dict = module_dict["_parameters"]  # weights and biases
        buffer_dict = module_dict["_buffers"]  # not used below

        for k in param_dict:
            param_name = module_name + "." + k
            info += "\n" + k.capitalize() + "\n\n"

            param = context.object(
                object_type=pytorch_table_name + constants.BANG + "Parameter",
                layer_name=curr_layer.name,
                offset=param_dict[k].vol.offset,
            )
            tensor = param.data.dereference()
            num_elements = tensor.num_elements
            tensor_data = tensor.get_data()
            params.append((param_name, tensor_data))

            info += "num_elements: " + str(num_elements) + "\n"
            info += "data_type: " + tensor.get_type()[0] + "\n\n"
            info += str(tensor_data) + "\n"

    return params, info
```

Example Printout:

```
Net.conv1 Attributes:

training: True
in_channels: 3
out_channels: 6
kernel_size: (5, 5)
stride: (1, 1)
padding: (0, 0)
dilation: (1, 1)
transposed: False
output_padding: (0, 0)
groups: 1
padding_mode: zeros

Weight

num_elements: 450
data_type: float

[-12.968002319335938, 4.591634678053128e-41 ... ]
```
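The printed attributes allow a quick sanity check of recovered tensors. PyTorch stores a Conv2d weight with shape (out_channels, in_channels / groups, kernel height, kernel width), so Net.conv1 should hold 6 * 3 * 5 * 5 = 450 values, matching num_elements, or 1,800 bytes of 4-byte floats. The sketch below does that arithmetic and decodes a little-endian float32 buffer; reading the buffer from the tensor's storage is left to the plugin's symbol classes, and the helper names are illustrative.

```python
import struct
from typing import List, Tuple


def conv2d_weight_count(in_channels: int, out_channels: int,
                        kernel_size: Tuple[int, int], groups: int = 1) -> int:
    """Number of elements in a Conv2d weight of shape (out, in/groups, kh, kw)."""
    kh, kw = kernel_size
    return out_channels * (in_channels // groups) * kh * kw


def decode_float32(raw: bytes, count: int) -> List[float]:
    """Interpret `count` little-endian IEEE-754 single-precision values."""
    return list(struct.unpack_from(f"<{count}f", raw))


if __name__ == "__main__":
    n = conv2d_weight_count(3, 6, (5, 5))
    print(n, n * 4)  # 450 1800
```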

The bulk of the work lies in creating the symbol classes that extract and make sense of bytes in memory. Source code is your friend and the only reliable reference for low-level implementation details. You have successfully dissected PyTorch ML processes and recovered invaluable model information.

Usage:

```
/volatility3$ bash getpyruntime.sh | xargs -t python3 vol.py -f ~/downloads/memdump.lime linux.pylistobjects --pid 3391 --PyRuntime
```